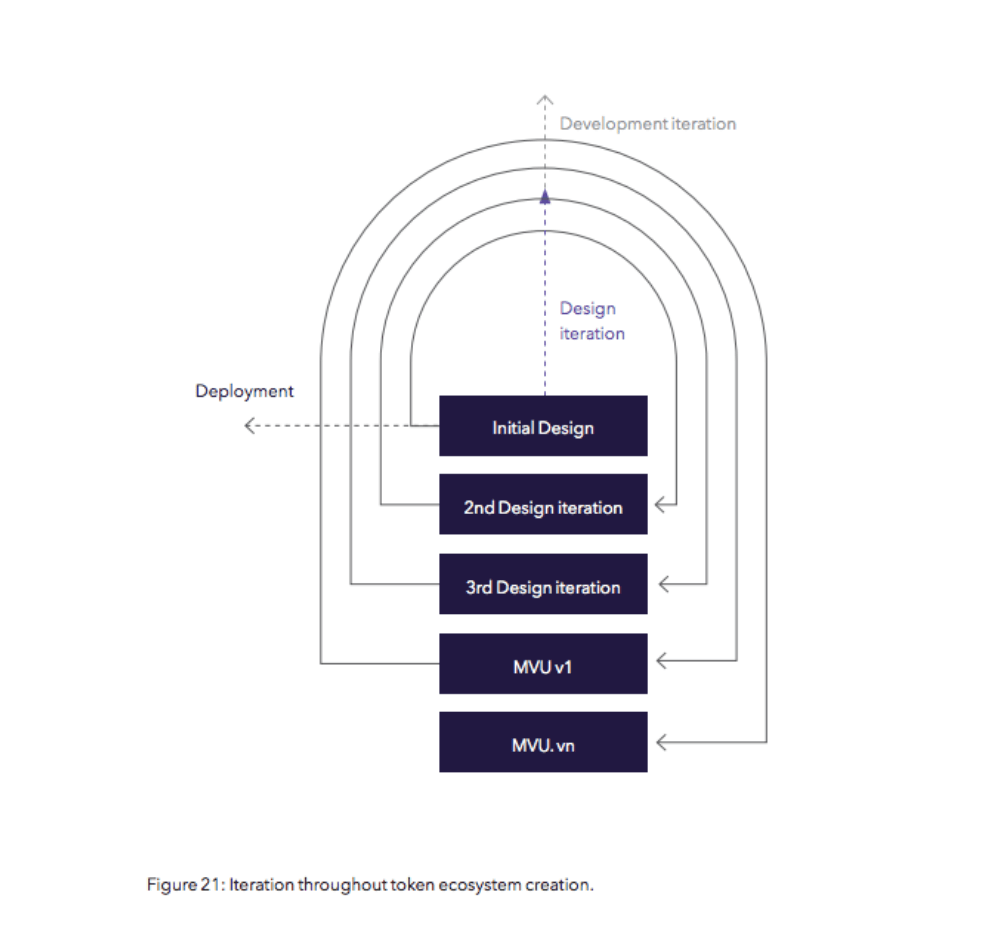

Deployment is focused on system integration and continued model optimisation through testing and iteration. Deploying the token model is a continuous process involving two distinct layers of iteration: design and development. The deployment phase consists of validating and stress testing the parameters that were defined during the design phase, before fully integrating them into the network.

The deployment process uses a combination of mathematical, computer science and engineering principles to fully understand the interactions in our network and its overall health. It proceeds as a sequential integration phase in which each sub-system is tested before it is integrated. The MVT for our ecosystem, which was established in the design phase, will need to be improved upon once integrated into the network. This means first validating key assumptions and then testing iteratively until all parameters have been optimised with respect to their constraints.

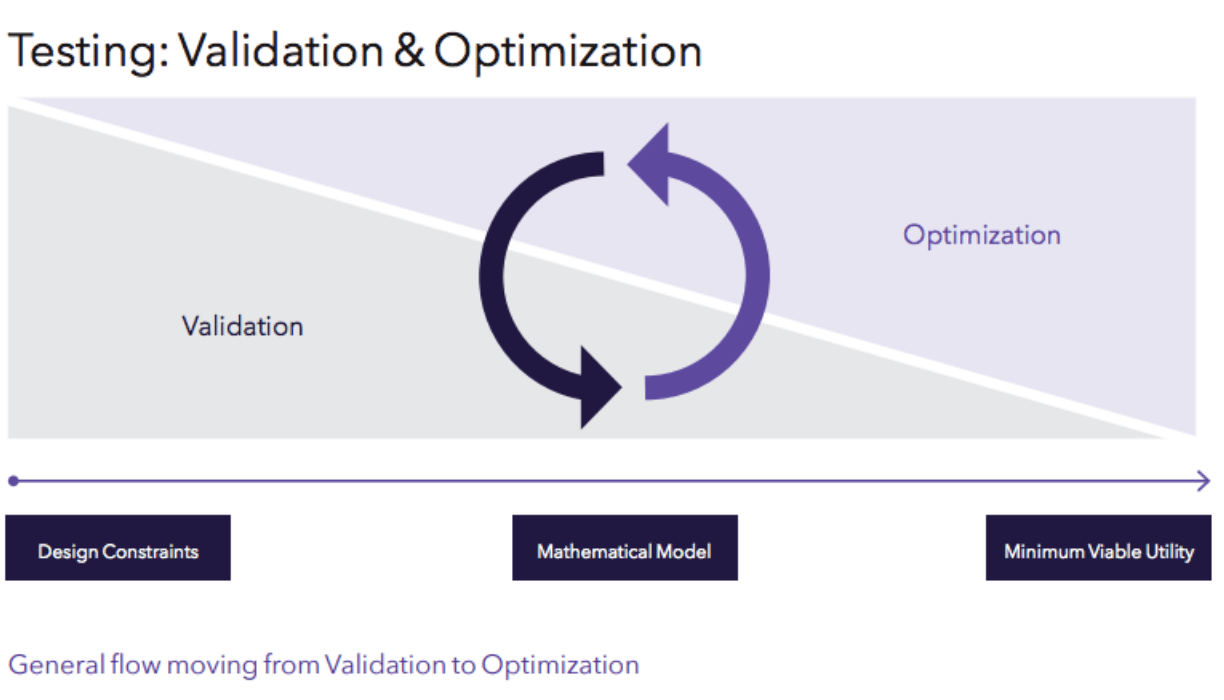

The initial token design is still an untested hypothesis; therefore, once finalised, it needs to be tested. Testing needs to be an integral part of any token design, to create not just the optimal design but also the optimal feedback loop that helps govern and monitor the system.

Case Study: Aragon Governance

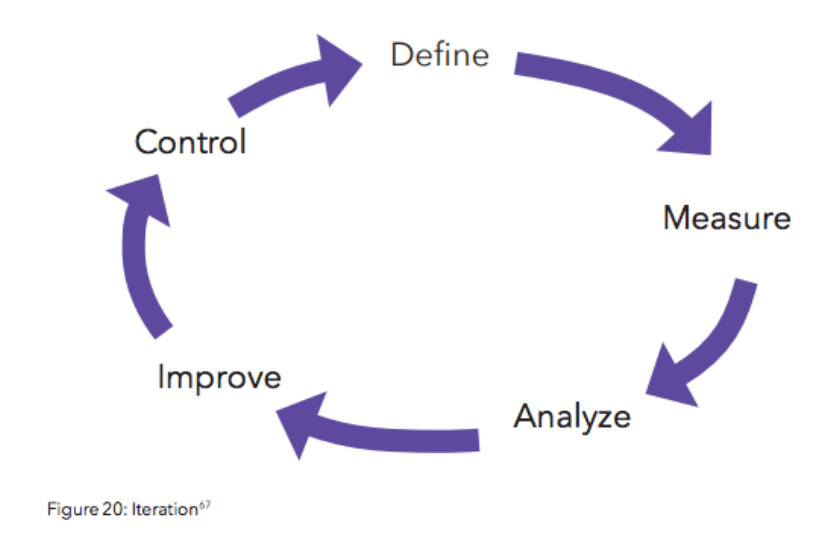

Aragon’s governance process, specifically the voting system incorporated into the Aragon Governance Proposal (AGP) process, is a great example of an untested hypothesis that the team is currently testing live. At launch, votes on AGPs were token weighted, meaning 1 ANT = 1 vote (more specifically, 10^-18 ANT = 1 vote), and voting times were fixed. The implications of voting systems are beyond the scope of this article, but for a great breakdown of some of the implications of this particular system, check out Evan Van Ness’s post examining the results of AGP42. He shows how a token whale gamed the system and caused the proposal to be rejected at the last minute, even though the Aragon community overall was voting for it. What’s important to note here is that the voting system employed by Aragon was a very simple one, and this was likely an intentional design choice. By deploying a super simple design, Aragon was able to set a baseline test, learn from this mechanism, and is very likely incorporating these learnings into future iterations of its voting system.
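
To make the token-weighted mechanism concrete, here is a minimal Python sketch of a tally in which each base unit (10^-18 ANT) counts as one vote. The balances, the helper names (`to_votes`, `tally`, `passes`) and the simple-majority rule are all illustrative assumptions; this is not Aragon’s contract logic and these are not the real AGP42 figures.

```python
from decimal import Decimal

# 1 ANT = 10^18 base units; each base unit carries one vote.
BASE_UNITS_PER_ANT = 10 ** 18


def to_votes(ant_amount: str) -> int:
    """Convert an ANT balance (given as a decimal string) to votes in base units."""
    return int(Decimal(ant_amount) * BASE_UNITS_PER_ANT)


def tally(ballots):
    """Sum token-weighted votes; `ballots` is a list of (ant_balance, supports) pairs."""
    yes = sum(to_votes(balance) for balance, supports in ballots if supports)
    no = sum(to_votes(balance) for balance, supports in ballots if not supports)
    return yes, no


def passes(ballots) -> bool:
    """Assumed rule for this sketch: a proposal passes if yes-votes exceed no-votes."""
    yes, no = tally(ballots)
    return yes > no


# Hypothetical balances: 50 holders in favour, 5 against, 1,000 ANT each.
community = [("1000", True)] * 50 + [("1000", False)] * 5
# A single hypothetical large holder voting against with 60,000 ANT.
whale = ("60000", False)

before = passes(community)            # community alone
after = passes(community + [whale])   # community plus the late whale vote
```

In this toy setup, 50 of 55 community voters support the proposal, yet one sufficiently large balance is enough to reverse the outcome. That is the kind of behaviour a baseline design like this lets a team observe and learn from.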

The continuous and complex nature of these networks means that they will always be in a state of evolution, and thus the token model needs to be able to evolve in step with the needs of the network, with the help of optimal feedback loops. Blockchains are great economic sensors, providing provenance of information not just on the current state of the network, but its evolutionary path. Due to the effectiveness of these networks in recording and preserving details on their current state, the question is not what can be measured, but what should be measured to determine the true state of network health. The answer to that question should be guided by the high-level network requirements outlined in the Discovery Phase, and the network’s objective function defined in the Design Phase.

Real-world tests are the best tool for validating and learning from your design, but what if the risks of a real-world test could be catastrophic for a network? Many networks are positioned as global public utilities, so testing key parameters live could mean disaster where safety is key. You wouldn’t want to stress test a bridge after you built it.

Getting to the optimal MVT without relying on live tests requires applying principles from systems engineering and control theory throughout the testing and implementation phase, to achieve the optimal incentive structure and an effective production-ready model. BlockScience recently announced an awesome tool they developed in house for “complex adaptive dynamic computer-aided design”, named cadCAD. cadCAD is an open-source Python library designed to assist in the validation and optimisation of token designs using simulations. It is a major contribution to the tooling in the space and one I’m super excited about.
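
As a rough illustration of what simulation-based validation looks like, the sketch below sweeps a parameter of a toy token supply model and keeps only the values that satisfy a constraint across many randomised runs. It is plain Python and does not use cadCAD’s API. The model (proportional issuance with a random burn), the function names and every number are assumptions made up for this example.

```python
import random

INITIAL_SUPPLY = 1_000_000.0  # hypothetical starting supply


def simulate_supply(issuance_rate, burn_rate, steps=365, seed=0):
    """Run one trajectory of a toy supply model and return the final supply.

    Each step mints `issuance_rate * supply` and burns a random share of
    supply drawn uniformly from [0, 2 * burn_rate], so the mean is `burn_rate`.
    """
    rng = random.Random(seed)  # seeded so that each run is reproducible
    supply = INITIAL_SUPPLY
    for _ in range(steps):
        minted = issuance_rate * supply
        burned = rng.uniform(0.0, 2.0 * burn_rate) * supply
        supply = supply + minted - burned
    return supply


def sweep(issuance_rates, burn_rate, runs=20, max_growth=1.10):
    """Return the issuance rates whose worst-case supply growth stays within `max_growth`."""
    acceptable = []
    for rate in issuance_rates:
        finals = [simulate_supply(rate, burn_rate, seed=s) for s in range(runs)]
        worst_growth = max(finals) / INITIAL_SUPPLY
        if worst_growth <= max_growth:
            acceptable.append(rate)
    return acceptable


# Hypothetical candidate issuance rates per step, tested against a 10% growth cap.
candidates = [0.0001, 0.0005, 0.001, 0.002]
safe_rates = sweep(candidates, burn_rate=0.0002)
```

The point is the workflow rather than the model: state a constraint, run many simulated trajectories per candidate parameter, and discard the candidates that violate the constraint before anything touches a live network.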

In much the same way the laws of physics are the primitives for classical engineering problems, such as building skyscrapers or bridges, the economic theories and incentives that guided the initial design are the primitives used in token engineering. If ‘Token Design’ is the primary discipline of the Discovery and Design Phases, then ‘Token Engineering’ is the primary discipline of the Deployment Phase.

In the same way that we iterate the design through to a production-ready model, testing should be iterated through to a production-ready feedback loop. These feedback loops are key to monitoring the health of the network, and they enable token models to be agile and make real-time adjustments. Token models are more sustainable if they are able to react and optimise based on small shocks in the network, a property commonly referred to as anti-fragility. If these small shocks go undetected, or the model simply does not adjust accordingly, then inefficiencies will build up and the risk of a much larger, destabilising shock increases, which threatens the viability of the network. Again, the key is designing for network safety.
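
One simple way to picture such a feedback loop is a proportional controller borrowed from control theory: measure a health metric, compare it with a target, and nudge a model parameter in proportion to the gap. The sketch below is a toy under stated assumptions, not a recommended design. The linear “network” response, the gain, the bounds and all names are hypothetical.

```python
def adjust_parameter(current, observed, target, gain=0.5, lower=0.0, upper=1.0):
    """One proportional feedback step: move `current` by gain * error, clamped to bounds."""
    error = target - observed
    updated = current + gain * error
    return max(lower, min(upper, updated))


def run_loop(initial_param, target, shocks, sensitivity=1.0):
    """Toy network: the observed metric equals sensitivity * parameter + external shock."""
    param = initial_param
    history = []
    for shock in shocks:
        observed = sensitivity * param + shock                 # measure network health
        param = adjust_parameter(param, observed, target)     # react to the measurement
        history.append((observed, param))
    return history


# A small persistent shock of -0.1 hits the metric from the fourth step onward.
shocks = [0.0, 0.0, 0.0] + [-0.1] * 20
history = run_loop(initial_param=0.5, target=0.5, shocks=shocks)
final_observed, final_param = history[-1]
```

In this toy the parameter drifts from 0.5 towards 0.6, which offsets the shock and brings the observed metric back to its 0.5 target. Without the adjustment step, the metric would simply stay at 0.4, which is the undetected inefficiency described above.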

The Deployment Phase is as much about testing as it is about deploying your token design. A primary aim for any token design should be safety, and to achieve this the same engineering rigour put into building bridges and skyscrapers should be applied to tokenised ecosystems. To fill this gap, the new field of ‘Token Engineering’ has recently emerged and is already starting to build out the tooling required for more robust designs. But this is only the beginning.

To learn more about our phased strategic process for token ecosystem creation, download our full report on Token Ecosystem Creation.

This article is for information purposes only and does not constitute investment advice. This article does not amount to an invitation or inducement to buy or sell an investment nor does it solicit any such offer or invitation in any jurisdiction.

In all cases, readers should conduct their own investigation and analysis of the data in the article. All statements of opinion and/or belief contained in this article and all views expressed and all projections, forecasts or statements relating to expectations regarding future events represent Outlier Ventures Operations Limited’s own assessment and interpretation of information available as at the date of this article.

No responsibility or liability is accepted by Outlier Ventures Operations Limited or Sapia Partners LLP for reliance on the contents of this article.

Outlier Ventures is an Appointed Representative of Sapia Partners LLP, a firm authorised and regulated by the Financial Conduct Authority (FCA).